The Terminator. I, Robot. Ex Machina. These are just a few of the many popular movies that explore the relationship between humans and robots. It is clear that we are all fascinated by (and in some cases, fearful of) the idea that Artificial Intelligence could advance enough to allow robots to emulate human emotions, motivations, and responses. With resources such as NI’s LabVIEW software driving engineering education forward, it seems as though each year brings new and groundbreaking advancements. In 2022, exactly how close are we to achieving the intelligent humanoid robots of the future?

When it comes to bringing the humanoid robots found in fiction into reality, companies such as Hanson Robotics, Engineered Arts, and Boston Dynamics are at the forefront of the conversation. In recent years, Hanson Robotics’ lifelike robot Sophia has made headlines as a representation of the human-like possibilities of AI. Just this year, Engineered Arts debuted Ameca, its newest robotic model, which boasts incredibly realistic facial expressions and gestures. Boston Dynamics, on the other hand, has focused more on movement and speed than on appearance and speech. In 2021, the company released a video of two of its Atlas robots displaying advanced agility and balance by running and jumping through a parkour course.

Sophia, the Robot Celebrity

Invented and developed by David Hanson of Hanson Robotics, Sophia was first activated in February 2016. Compared with the Hong Kong-based company’s earlier robotic models, Sophia was the most advanced, with much more human-like reactions and conversational capabilities.

Hanson Robotics developed both the cognitive software and AI system that power Sophia and the artificial “skin” that covers its face and arms. The company hopes that this “holistic” approach, which treats robots as complete synthetic organisms, will help them develop goal-oriented logic, cognizance, and more natural conversation. Sophia is described by its creators as having “hybrid human-AI intelligence”. A text-to-speech engine from the company CereProc provides Sophia’s voice synthesis, allowing it not only to speak but also to sing. This was demonstrated in a duet with Jimmy Fallon on The Tonight Show in 2018. Sophia was also notably given citizenship in Saudi Arabia in 2017.

Two years ago, Hanson Robotics unveiled Sophia 2020, which is equipped with the movement and speech tools required for both corporate and scholarly applications. Sophia 2020 has sensors capable of body tracking and face recognition, and it can automate a wide variety of activities that involve physical contact. Designed for use in healthcare, service, or research, Sophia 2020 is highly customizable in both appearance and programming.

Ameca, the Blank Slate

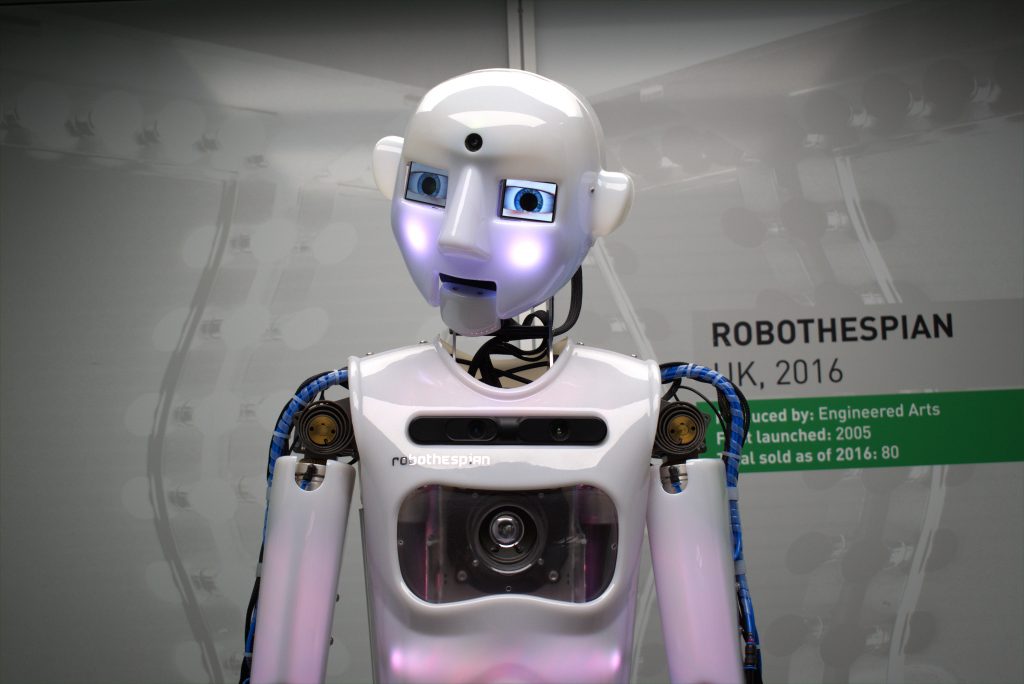

While Hanson Robotics continues to develop its robots to complete hands-on service tasks in healthcare or corporate fields, the UK-based company Engineered Arts focuses on humanoid robots for entertainment. Its RoboThespian robot, pictured above, is most often used to draw large crowds at events and exhibitions. While Engineered Arts does have Mesmer (a line of robots that are incredibly human and lifelike in their appearance), its newest robot was made specifically to combat the effects of the “Uncanny Valley”.

This robot is Ameca. While its body is composed of circuitry and hardware that is meant to be shown off, its face and hands are covered in a gray “skin” developed by the company. It runs on a software framework called Tritium, which was developed in-house at Engineered Arts over a decade ago. In a video demo released in December 2021, Ameca’s range of motion and remarkably human-like gestures were put on display. Many viewers thought the fluidity with which Ameca moved its face and limbs seemed almost like CGI.

Ameca is truly made to be a blank slate for the user. While it does use AI software and automated speech recognition (through the Google Cloud Speech-to-Text API), Ameca’s primary objective is to serve as a platform to further the development of AI. It is also modular by design, making future upgrades or customizations easy for the user.

Atlas, the Mobile Marvel

While Sophia and Ameca are capable of groundbreaking facial expressions, gestures, and human-like interactions with people, they both lack the full ability to display one distinctly human trait: walking. This type of bipedal mobility is where Atlas by Boston Dynamics excels. In a video released in August 2021, two Atlas units can be seen performing incredible feats of balance and athleticism on a parkour course. These advanced controls are thanks to one of the smallest transportable hydraulic systems in the world, which powers Atlas’ 28 hydraulic joints.

While Atlas may not have a humanoid face or artificial skin, it uses AI to reason algorithmically about how its body moves through and interacts with the space around it. Sensors provide depth perception, and trajectory optimization is used to build complex movement routines that are stored in behavioral libraries. The creators refer to this type of AI as “Athletic Intelligence”.
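To make the idea of a behavioral library more concrete, here is a minimal Python sketch. It is not Boston Dynamics’ software: the `Behavior` class, the routine names, the height limits, and the knee-angle keyframes are all hypothetical values chosen for illustration. It only shows the general pattern of storing precomputed movement routines and picking one based on a sensed obstacle height.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Behavior:
    """One stored movement routine (hypothetical structure)."""
    name: str
    max_obstacle_height_m: float  # tallest obstacle this routine can clear
    keyframes: tuple              # precomputed (time_s, knee_angle_deg) pairs


# Hypothetical library; the numbers are made up, standing in for
# routines that would be optimized ahead of time.
LIBRARY = (
    Behavior("walk", 0.05, ((0.0, 10.0), (0.5, 25.0), (1.0, 10.0))),
    Behavior("step_up", 0.30, ((0.0, 10.0), (0.4, 60.0), (0.8, 15.0))),
    Behavior("jump", 0.60, ((0.0, 70.0), (0.2, 5.0), (0.6, 70.0))),
)


def select_behavior(obstacle_height_m, library=LIBRARY):
    """Return the least demanding routine that clears the obstacle, or None."""
    candidates = [b for b in library
                  if b.max_obstacle_height_m >= obstacle_height_m]
    return min(candidates, key=lambda b: b.max_obstacle_height_m, default=None)


def knee_angle_at(behavior, t):
    """Linearly interpolate the stored keyframes at time t, clamped at both ends."""
    frames = behavior.keyframes
    if t <= frames[0][0]:
        return frames[0][1]
    for (t0, a0), (t1, a1) in zip(frames, frames[1:]):
        if t <= t1:
            return a0 + (a1 - a0) * (t - t0) / (t1 - t0)
    return frames[-1][1]


if __name__ == "__main__":
    # Pretend a depth sensor reported a 0.2 m obstacle ahead.
    chosen = select_behavior(0.2)
    print(chosen.name, knee_angle_at(chosen, 0.2))
```

In this toy version the “sensing” is a single number and the “routine” is a single joint angle over time. A real robot would track many joints at once and adjust the stored routine as it moves.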

The hope for Atlas is that it can be used in commercial applications that reduce the danger or risk that certain activities pose to humans. The Massachusetts-based company also operates under strict ethical principles, which state that it will not partner with any entities looking to use its robots as weapons or for harm.

Conclusion

The question remains: how close are we to the robot-human relationships we see in our favorite movies? Technology is advancing at an incredible rate, but the difference between robots and AI is key. Robots are becoming more articulated, mobile, and overall more humanoid, but the AI that powers them is not at the level of human consciousness… yet. While we cannot yet have a conversation with a sentient machine (as Dave does with HAL 9000 in 2001: A Space Odyssey), we are capable of working together to advance the technology that allows us to understand both robots and humans better.

Additional Information

https://www.hansonrobotics.com/

https://www.engineeredarts.co.uk/

https://www.bostondynamics.com/