This is undoubtedly not the first blog post or article about ChatGPT that you have read. Since its initial release just a few months ago in November 2022, OpenAI’s large language model has exploded in popularity. The possible applications for ChatGPT seem endless, and companies and individuals alike are asking the fill-in-the-blank question with a million answers: “Can ChatGPT help me _____?”

EDITOR’S NOTE: Since the publication of this blog post, NI announced its newest AI assistant, NIGEL, at NI Connect 2023. NIGEL is made for coding within LabVIEW, and is capable of controlling hardware and creating graphical code. Learn more about it in our Day 2 Keynote blog post.

While ChatGPT is accessed over the internet, it cannot perform searches in real time as a search engine can. Instead, it relies on the large body of text it was trained on, which covers a wide variety of subjects. ChatGPT Plus is the current paid version of the service, and it uses the more advanced GPT-4 model, which was trained on Microsoft Azure AI supercomputers. Because of the sheer volume of that training data, ChatGPT’s knowledge is vast, but it is far from perfect.

To be clear, ChatGPT’s abilities are nothing short of amazing. It is eloquent in its responses and capable of conversation eerily similar to a human’s. Its general knowledge is often accurate enough to be helpful when you need a quick answer. However, the true strength of ChatGPT lies in its use as a tool, not as a solution. With the right queries and direction, the language model can provide an incredible starting point for almost any project.

ChatGPT and LabVIEW

One of the biggest hurdles users face with their NI hardware is writing LabVIEW programs to execute tests. Since ChatGPT counts programming among its strengths, many people have been putting it to the test with a variety of programming languages.

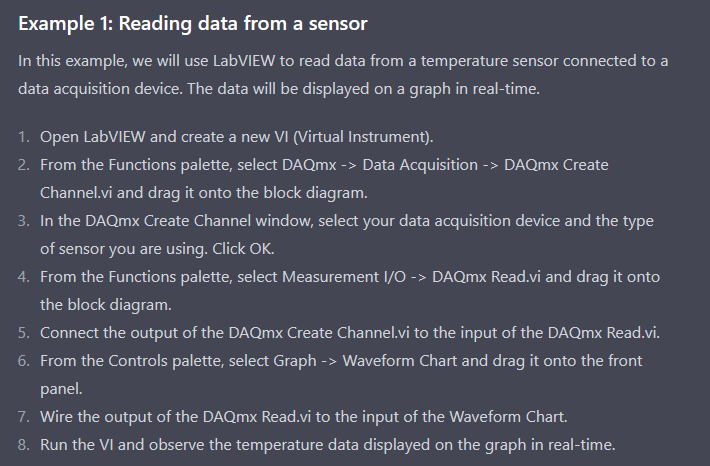

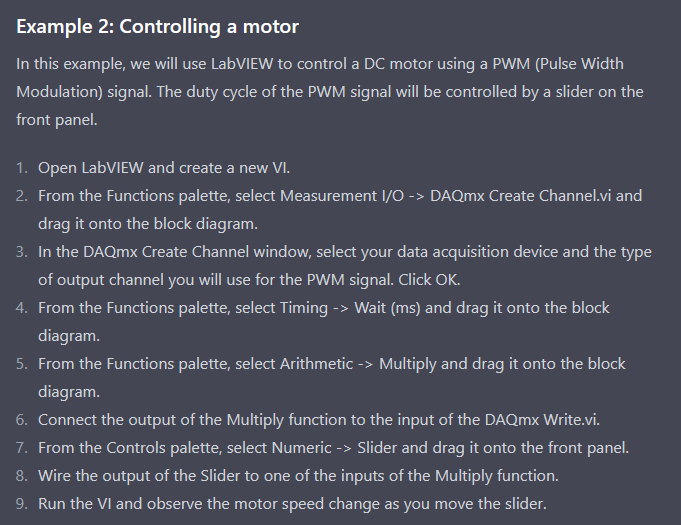

As a graphical programming environment, LabVIEW poses a unique challenge to the text-based ChatGPT. When we asked if it could write some code in LabVIEW for us, it initially gave us two examples of common LabVIEW programming tasks. (Note: all of our interactions with ChatGPT for this post were made using the free version, which runs on GPT-3.5.)

As expected, ChatGPT does well when answering with information from well-documented procedures such as the ones above. But how would it do with writing actual code?

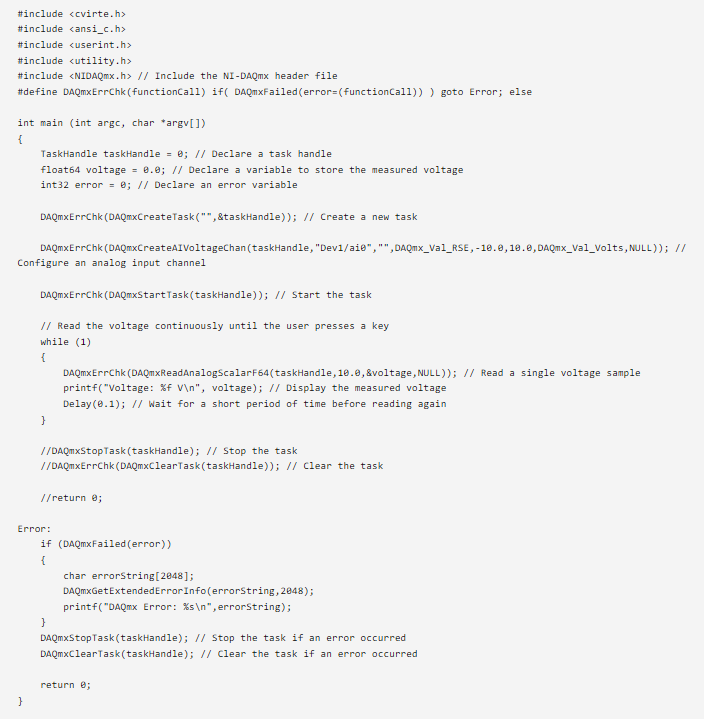

To find out, we ran a brief test. As with every good experiment, we had a control: a snippet of C code that reads voltage from a DAQ device in LabWindows/CVI. It looked like this:

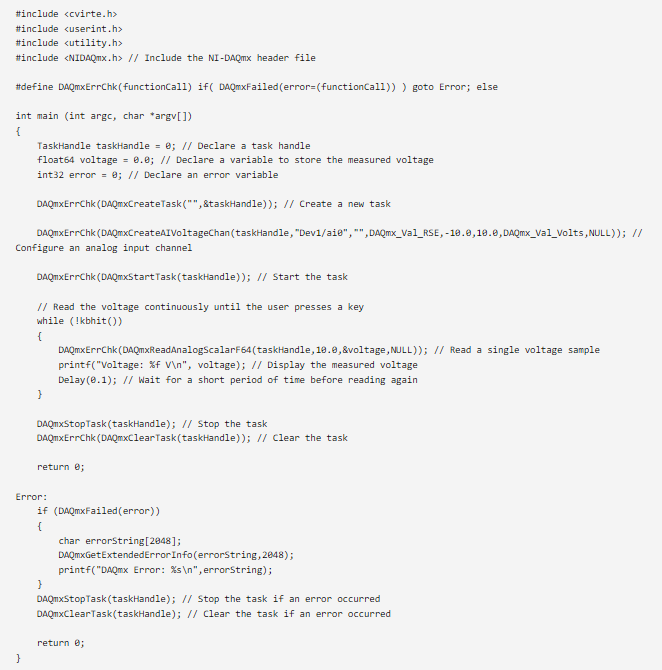

We asked ChatGPT to write some code for the same purpose, using the prompt “can you write code for reading voltage from a data acquisition device in LabWindows/CVI?” The response was completely confident. This is what ChatGPT’s code looked like:

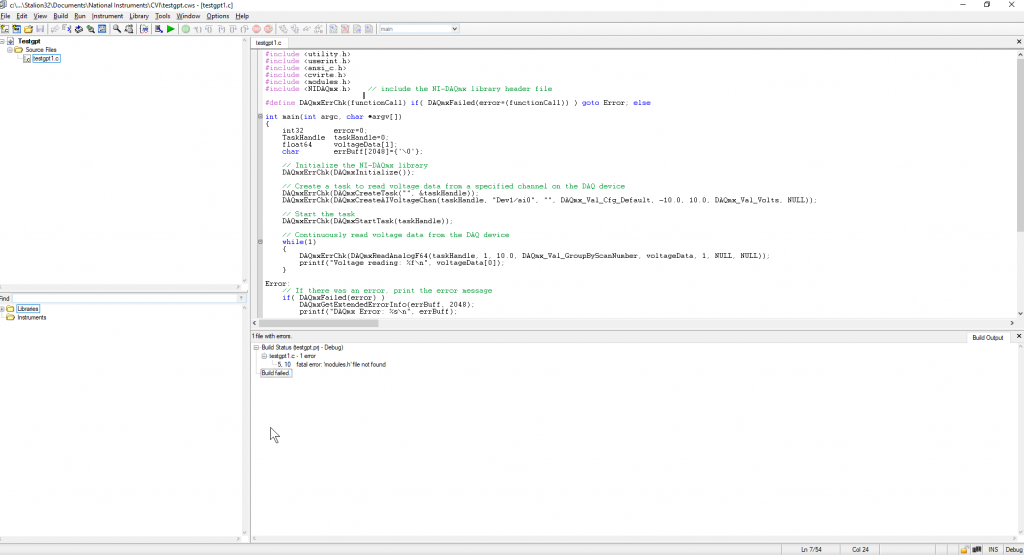

There were a few noticeable differences right off the bat, but would it run? We built it in LabWindows/CVI, the environment it was written for, to see if it would work.

The first error came from the compiler trying to locate the nonexistent “modules.h” file. We removed the “#include” directive for that file to see what would happen.

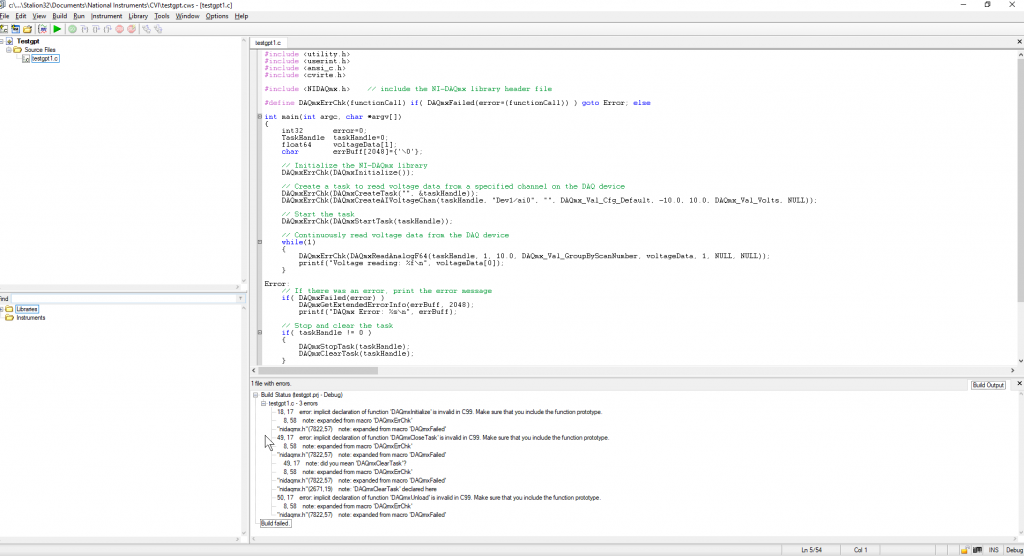

The result? Even more errors.
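
A missing header like the one described above can be caught before compiling. The following is a minimal sketch in Python, not part of our original test: it scans C source for `#include` directives and reports any header that cannot be found in a list of include directories. The function name `find_missing_headers`, the sample snippet, and the `/usr/include` path are all hypothetical and chosen for illustration; only “modules.h” comes from our experiment.

```python
import re
from pathlib import Path
from typing import Iterable, List

# Matches both #include "name.h" and #include <name.h>
INCLUDE_RE = re.compile(r'^\s*#\s*include\s*[<"]([^>"]+)[>"]', re.MULTILINE)


def find_missing_headers(source: str, include_dirs: Iterable[str]) -> List[str]:
    """Return headers named in `source` that exist in none of `include_dirs`."""
    dirs = [Path(d) for d in include_dirs]
    missing: List[str] = []
    for header in INCLUDE_RE.findall(source):
        found = any((d / header).is_file() for d in dirs)
        if not found and header not in missing:
            missing.append(header)
    return missing


if __name__ == "__main__":
    # Hypothetical generated snippet; only "modules.h" comes from the post.
    generated = '#include <stdio.h>\n#include "modules.h"\n'
    # Hypothetical include directory; point this at your toolchain's headers.
    print(find_missing_headers(generated, ["/usr/include"]))
```

A check like this only flags headers that do not exist. It says nothing about whether the functions the generated code calls are real, which is why removing the bad `#include` was not enough on its own.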

Conclusion

Clearly, our experiment was a quick one. We could spend much more time testing different queries, clarifying with more information, or even continuing the conversation with ChatGPT and asking for specific fixes, possibly leading to a different result. However, the conclusion would most likely remain the same: ChatGPT is an amazing tool, but it must be used correctly. The code ChatGPT gave us, while imperfect, could still save a lot of time and provide a major head start on a programming project, but it takes knowledge of what went wrong to address the issues.

ChatGPT offers an unprecedented level of competence, but it is not a replacement for human understanding. It may not (yet) be a threat to a programmer’s job title on its own, but it can certainly be an incredible asset for debugging, troubleshooting, or saving valuable time.